The rise of AI agents has sparked a familiar narrative in enterprise technology. As these systems become more capable of reasoning, planning and acting across workflows, there is a growing assumption that they will simplify, or even eliminate, layers of enterprise software.

Gartner has even predicted that by 2028, 33% of enterprise software applications will incorporate agentic AI capabilities, up from less than 1% in 2024, and that agentic AI will make at least 15% of day-to-day work decisions autonomously.

It raises an interesting question: If an agent can decide what needs to happen and execute it, why maintain complex infrastructure underneath?

But as organizations move beyond experimentation and into production, a different reality is emerging. AI agents are not replacing enterprise infrastructure. They are exposing just how much it still matters. This is particularly visible in how enterprises are thinking about integration layers, the critical points that ultimately determine whether AI-driven decisions can be executed reliably.

Building integrations is easy. Running them is hard.

AI has genuinely made it easier to generate integrations. Ask a large language model to write the code connecting two systems and it will often produce something workable in minutes. This is real progress, and it has lowered the barrier to getting started.

What AI hasn’t changed is what happens next. That integration still needs to be hosted somewhere secure. It needs to be available around the clock, scalable enough to handle peak periods without running up enormous infrastructure costs, and resilient enough to handle the inevitable moments when a downstream system goes down, slows down, or starts rate-limiting requests. Someone, or something, needs to hold onto the messages that could not be delivered, queue them appropriately, and push them through when the connection is restored.
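To make the "hold, queue, and push through" requirement concrete, here is a minimal in-memory sketch of a store-and-forward queue with exponential backoff. The names `StoreAndForwardQueue` and `DownstreamUnavailable` and the retry parameters are illustrative assumptions, not part of any particular platform, and a production system would persist messages durably rather than keep them in memory.

```python
import time
from collections import deque
from typing import Callable, Deque


class DownstreamUnavailable(Exception):
    """Hypothetical error a sender raises when the target is down or rate-limiting."""


class StoreAndForwardQueue:
    """Holds messages in order and retries delivery with exponential backoff."""

    def __init__(self, send: Callable[[dict], None], max_attempts: int = 5,
                 base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self._send = send
        self._max_attempts = max_attempts
        self._base_delay = base_delay
        self._sleep = sleep  # injectable so the wait can be replaced in tests
        self._pending: Deque[dict] = deque()

    def enqueue(self, message: dict) -> None:
        self._pending.append(message)

    def pending(self) -> int:
        return len(self._pending)

    def flush(self) -> int:
        """Deliver queued messages in order; return how many were delivered."""
        delivered = 0
        while self._pending:
            message = self._pending[0]  # peek; remove only after success
            for attempt in range(self._max_attempts):
                try:
                    self._send(message)
                    break
                except DownstreamUnavailable:
                    # Wait longer after each failed attempt: base, 2x, 4x, ...
                    self._sleep(self._base_delay * (2 ** attempt))
            else:
                # Every attempt failed: keep the message for a later flush.
                return delivered
            self._pending.popleft()
            delivered += 1
        return delivered
```

The point of the sketch is that a message leaves the queue only after the downstream system accepts it, so an outage delays delivery without losing or reordering anything.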

This is where the gap between building and operating becomes clear. AI can help you create integrations, but it does not remove the operational burden of running them reliably in production.

That is precisely why the ‘as-a-service’ element of enterprise platforms matters more in an agentic world, not less. It is not just about the software. It is about the security certifications, the uptime guarantees, the monitoring, and the commercial accountability. If something breaks in a production environment, enterprises need more than code. They need systems designed to handle failure.

Where agentic AI meets reality

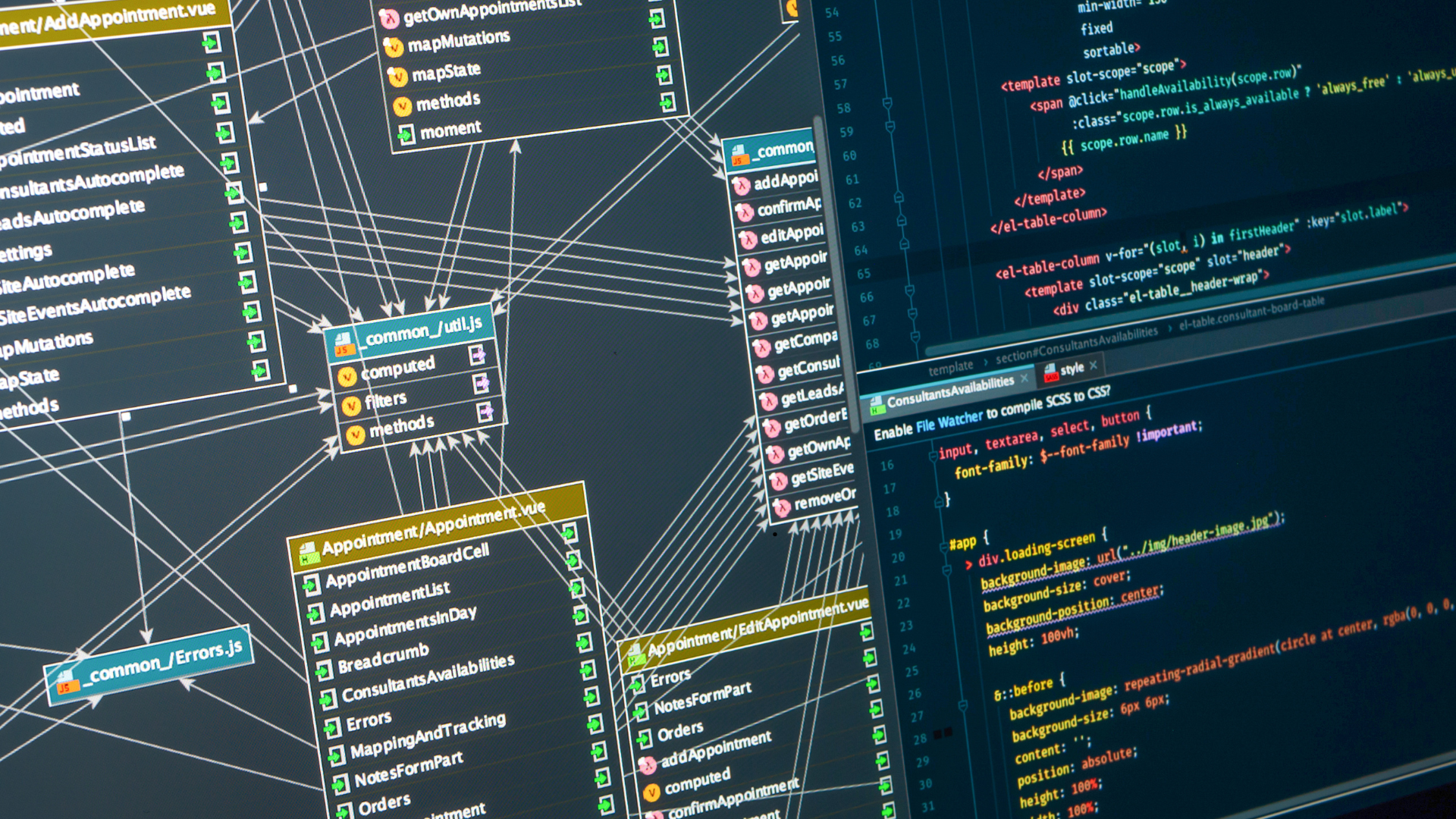

The gap between reasoning and execution becomes most visible in real-world workflows. The more autonomous agents an enterprise deploys, the more interactions they generate across systems. Every decision an agent makes triggers queries, API calls and actions that must be executed correctly. APIs are, quite simply, the pillars on which agentic systems are built.

Consider a typical e-commerce transaction. A single order touches multiple systems. Inventory must be checked and updated. Payment must be processed. Fulfillment must be triggered. Financial systems must be updated. Each step depends on the others being completed in the correct order.

If an AI agent attempts to orchestrate this process directly, even small inconsistencies can create cascading problems. An inventory update might succeed in one system but fail in another. Payment might be processed before stock is confirmed. The result can be overselling, delayed fulfillment, or mismatched records across systems.

Traditional enterprise architectures handle this complexity through deterministic workflows. Processes are designed to execute in a specific sequence, with clear rules for handling failure. Systems store messages, retry operations, and ensure that data remains consistent across platforms. This is what allows businesses to operate reliably at scale.
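A deterministic workflow of this kind can be sketched as a fixed list of steps, each paired with an undo action that runs if a later step fails. This is a simplified illustration only: the step names, the `order` dictionary, and the helper functions are assumptions made for the example, and real systems would call inventory and payment services rather than mutate a dictionary.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Each step is (name, action, undo). All names here are illustrative.
Step = Tuple[str, Callable[[dict], None], Callable[[dict], None]]


@dataclass
class WorkflowResult:
    ok: bool
    log: List[str] = field(default_factory=list)


def run_workflow(steps: List[Step], order: dict) -> WorkflowResult:
    """Run steps in a fixed order; on failure, undo completed steps in reverse."""
    result = WorkflowResult(ok=True)
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action(order)
        except Exception as exc:
            result.ok = False
            result.log.append(f"{name}: failed ({exc})")
            for done_name, _, undo in reversed(completed):
                undo(order)
                result.log.append(f"{done_name}: undone")
            return result
        completed.append(step)
        result.log.append(f"{name}: done")
    return result


def reserve_stock(order: dict) -> None:
    if order["stock"] < order["qty"]:
        raise RuntimeError("insufficient stock")
    order["stock"] -= order["qty"]


def release_stock(order: dict) -> None:
    order["stock"] += order["qty"]


def charge_payment(order: dict) -> None:
    if order.get("card_declined"):
        raise RuntimeError("card declined")
    order["charged"] = True


def refund_payment(order: dict) -> None:
    order["charged"] = False


# Stock is always confirmed before payment is taken.
ORDER_STEPS: List[Step] = [
    ("reserve_stock", reserve_stock, release_stock),
    ("charge_payment", charge_payment, refund_payment),
]
```

Because the sequence and the failure handling live in the workflow rather than in the caller, a declined payment releases the reserved stock every time, regardless of what triggered the order.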

AI agents, by contrast, are not inherently deterministic. They may approach the same task in slightly different ways depending on context or available data. That flexibility is powerful for analysis and decision-making, but it introduces risk when applied directly to execution.

This is where the distinction between reasoning and execution becomes critical. Agents are highly effective at gathering data, identifying patterns and recommending what should happen next. But executing those decisions across multiple systems requires infrastructure designed for consistency, control and repeatability.

The answer is not to remove agents from these workflows. It is to be clear about their role. Agents can reason, analyze and initiate actions. But the execution of those actions must still run through systems designed to handle complexity, manage failure, and ensure that operations remain reliable.

AI as a reasoning layer, not an execution engine

A more useful way to think about enterprise AI is as a layered architecture. AI becomes a reasoning capability that sits above the operational stack. It evaluates data, generates insights, and determines what actions should be taken. Beneath it, enterprise systems handle execution, ensuring that those actions are carried out reliably across the business.

This separation is not a limitation. It is what allows AI to be applied safely at scale. It also reinforces the importance of data. AI systems are only as effective as the data they receive. If an agent cannot access the right data at the right time, it will either fail to act or produce incorrect outcomes. In some cases, it may even hallucinate results rather than acknowledge missing information.

Ensuring that agents have access to accurate, timely and well-structured data is therefore critical. That responsibility sits firmly within the enterprise infrastructure layer.

How to architect for agentic AI

Looking ahead, enterprise architectures are unlikely to undergo a complete reset. Most organizations will continue to operate layered environments that separate user interfaces, application logic, and back-office systems.

What will evolve is how those layers are used. As AI agents become more prevalent, the focus will shift toward making systems more accessible to machine-driven users. That means exposing data more effectively, standardizing how actions are triggered, and ensuring that workflows can be executed reliably regardless of where decisions originate.

It also means placing greater emphasis on the connective tissue between systems. Not because it is new, but because it becomes more visible as the volume of interactions increases. The systems that connect applications and manage workflows will become the bridge between AI-driven decisions and real-world execution.

For a business to safely hand over the keys to an autonomous agent, it first needs to solve the unglamorous problem: clean, connected, standardized data.

To support agentic AI effectively, enterprises need to think in terms of layered responsibility. AI systems should be used to reason over data, identify opportunities, and initiate actions. But those actions must be executed through infrastructure designed for reliability and control.

In practice, this means ensuring that integration layers expose clean, well-governed APIs, that workflows are orchestrated in a deterministic and auditable way, and that systems are designed to handle failure as a standard condition, not an exception.

What is changing now is that integration is no longer just about moving data, but about governed orchestration. By adopting standards such as OnX, UCP and AMP, organizations can expose their operations in an agent-native way that keeps those agents within defined boundaries. This allows enterprises to make systems accessible to AI without sacrificing control or reliability.

It also means separating decision-making from execution. Agents can determine what should happen, but enterprise infrastructure must remain responsible for how it happens.
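One way to picture this separation is a gateway that accepts an agent's proposed action as plain data and runs it only if the enterprise has registered a handler for it. The sketch below is a hypothetical illustration: `ProposedAction`, `ExecutionGateway`, and the audit-log format are invented for the example and do not correspond to any specific standard or product.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class ProposedAction:
    """What the agent decided should happen, expressed as data."""
    name: str
    params: Dict[str, Any]


class ExecutionGateway:
    """Owns how actions run: only registered handlers are ever executed."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[..., Any]] = {}
        self.audit_log: List[str] = []

    def register(self, name: str, handler: Callable[..., Any]) -> None:
        self._handlers[name] = handler

    def execute(self, action: ProposedAction) -> Any:
        handler = self._handlers.get(action.name)
        if handler is None:
            # Anything outside the defined boundary is refused and recorded.
            self.audit_log.append(f"REJECTED {action.name}")
            raise PermissionError(f"action not allowed: {action.name}")
        self.audit_log.append(f"ACCEPTED {action.name}")
        return handler(**action.params)
```

In this arrangement the agent never calls a backend system directly. It can only ask, and every request, accepted or rejected, leaves an audit trail.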

Critically, this approach does not require a wholesale rebuild of existing systems. It requires ensuring that underlying data is trustworthy and that infrastructure adheres to established security and governance standards such as SOC 2, ISO 27001, and emerging frameworks like ISO 42001, so that both systems and their outputs can be relied upon.

In the future, enterprises won’t be looking to replace infrastructure, but to build infrastructure that can support, govern and reliably execute the decisions AI agents make.
