TLDR

- AI governance is no longer an IT issue — risks span legal, cybersecurity, data and compliance, requiring cross-functional ownership.

- Companies are deploying AI at an increasing clip, but governance is lagging — only about 21% have mature frameworks despite rapid adoption.

- The priority is scaling governance without slowing innovation — build on existing risk frameworks and focus on trust, or adoption will stall.

Artificial intelligence is forcing companies to rethink technology governance in ways not seen during previous computing revolutions, according to Cliff Goss, Deloitte’s regulatory, risk and forensic AI leader.

Unlike earlier waves of technology such as cloud computing or the internet boom, where oversight often sat largely within IT, AI introduces risks that span multiple parts of an organization, from cybersecurity and legal teams to data governance and compliance.

That complexity makes AI governance fundamentally different from previous technology transitions.

“What’s different about AI is that AI risk is a transverse risk,” Goss said in an interview with The AI Innovator. “There’s no single person or single organization within an enterprise that can really own all of the risks.”

During earlier technology shifts, responsibility often fell within a single technical group. AI, by contrast, creates risks that extend across disciplines including data privacy, intellectual property, regulatory compliance, model reliability and cybersecurity.

Goss cited several risks, among others: data leaking from the organization and the ethics surrounding that data; model risks such as hallucinations and unintended bias; cybersecurity risks; and legal risks such as intellectual property rights.

As a result, managing AI requires collaboration among groups that historically may have had little reason to work together, he added. In the past, people would get together to discuss a use case as part of their governance efforts. But “that’s not scalable,” Goss said.

AI has a lot more moving parts that need monitoring, especially as companies deploy “hundreds or (even) thousands of gen AI solutions and agents,” he said. A small committee structure “doesn’t work.”

No need to start from scratch

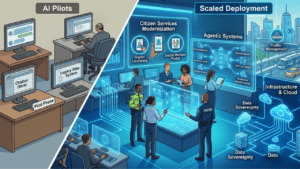

Deloitte’s latest “State of AI in the Enterprise” report shows this gap: the pace of AI deployment is rising rapidly, with nearly three in four companies planning to deploy agentic AI systems within two years, yet only about 21% report having mature governance frameworks in place to manage those systems.

The challenge becomes, “how do you actually operationalize this when you have that much scale?” Goss asked. The key is to do “the risk management work that has to get done, but in a way that doesn’t slow down people who are trying to leverage new technologies, be innovative.”

The good news is that he doesn’t believe legacy systems have to be completely replaced. “There’s no reason why anyone really should be starting from scratch,” he said. “We see a lot of different organizations lean into areas that are most mature for them.”

For example, most financial institutions have a model risk management program that is on the “easier side to tailor” for gen AI, Goss added. For other industries without that structure, a good place to start is to look at where risk management does exist and leverage the framework.

“What you want to do is effectively look and say, ‘how are we addressing those risks? What’s the distance between what we’re doing now and what we need to do? Who should do it and how do we do it effectively?’”

“No one should be throwing away effective programs,” Goss said. “We should just be making them better.”

Advice for business leaders

As the corporate world moves into agentic AI, Goss said governance must reflect this transition. “It’s not so much a change as an incremental build-upon because gen AI can be the engine within agent and multi-agentic programs.”

However, agents could give rise to new risks that aren’t contemplated in a standalone gen AI solution that does one task. He said many institutions are revisiting their risk management, governance and compliance programs that were already primed for gen AI and are revising them for agentic AI.

Companies see all this work as essential because governance guardrails help build trust in AI systems. “People want to move fast, but they also know that people will not use this technology … if people do not trust the outcomes,” he said. Without trust, “you can invest all you want; people aren’t going to use it.”

Another factor is that AI regulations are changing around the world. Goss said companies should still set up their AI risk management programs by focusing on a few core issues. “What is your risk appetite around this technology based on the current regulations that exist,” as well as looking ahead to what might be coming and keeping track of changes, he said.

Goss has three tips for business leaders:

- Build cross-functional governance structures that bring together stakeholders from risk, legal, cybersecurity, compliance and technology teams. Get buy-in on the effort overall.

- Think through how to operationalize risk management efforts in the long run. Go beyond the theoretical and see whether the framework is effective in monitoring thousands of AI agents.

- Don’t overlook AI literacy. People from across the enterprise need to understand not only AI’s potential but also its risks and limitations.

Companies should get started. “I would say a lot of work needs to be done on AI governance still,” Goss said. “Even at the most sophisticated and mature organizations, a lot has to be done.”