TLDR

- Boards are shifting from simply monitoring management to actively shaping AI strategy as artificial intelligence becomes embedded across core business operations, according to Lee Henderson, EY Americas Center for Board Matters leader.

- AI governance is becoming a major board-level issue, with concerns over hallucinations, bias and accountability pushing companies to rethink board expertise, oversight processes and even the use of AI ‘companions’ in the boardroom.

- Despite fears of widespread AI-driven job losses, Henderson argues companies still need entry-level workers and should redesign jobs around human-AI collaboration rather than fire junior talent.

Boards are entering unfamiliar territory as artificial intelligence shifts from a back-office tool to a core driver of strategy, risk and hiring decisions. That shift should make directors rethink how they oversee companies.

Compared with past tech revolutions, “it’s the biggest thing we’ve ever seen, so we have to approach it differently,” said Lee Henderson, EY Americas Center for Board Matters leader, in an interview with The AI Innovator.

Board members can’t think of AI as just a software tool anymore. “Generative AI is like a partner; it’s like a co-worker,” he added. “When you have this partner, you have to understand what are the handoffs that happen because every handoff has always created risk.”

As AI becomes more like a co-worker, it creates new governance challenges for boards. Decisions are no longer confined to human actors. AI systems can make recommendations, automate workflows and, in some cases, act with limited autonomy. That raises questions about accountability, oversight and risk tolerance.

The good news is that most boards will not have to start from zero. “Overall, I think boards are ready,” Henderson said. “Boards know that they have a fiduciary duty … and that hasn’t really changed with AI.”

What has changed is how that duty is carried out.

Historically, boards focused on monitoring management, but that model is changing. “With AI, there’s a shift from monitoring to shaping strategy” as companies move from siloed AI to AI embedded everywhere, Henderson said.

That shift also reflects broader changes in how companies are using AI. Early deployments focused on productivity – automating reports, summarizing documents or assisting with coding. But those gains are becoming table stakes.

According to a recent EY report, “How boards can lead in a world remade by AI,” many companies are now moving toward redesigning core processes and business models around AI, rather than layering tools on top of existing workflows.

Board diversity – and an AI companion

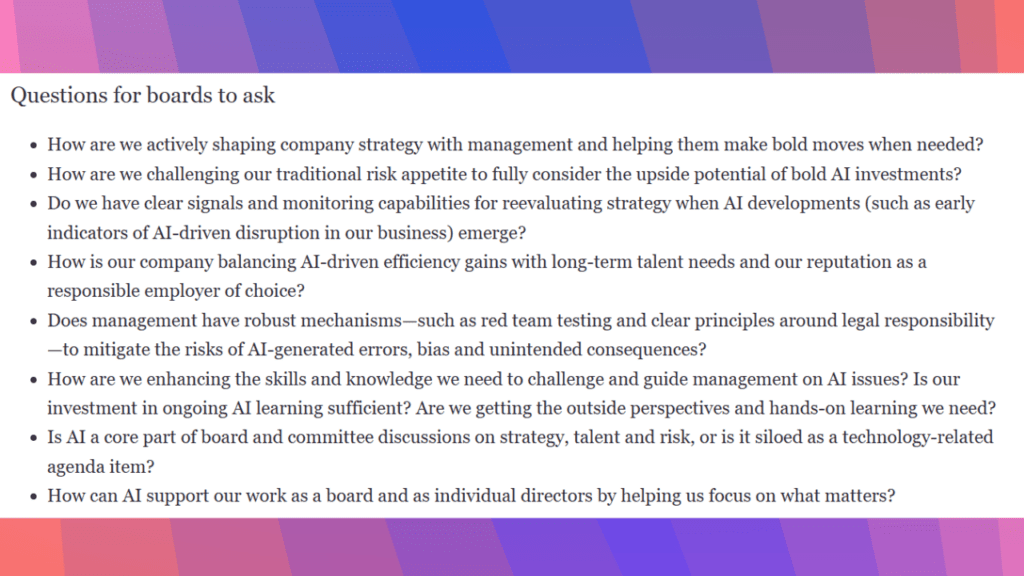

Boards must pivot as well. Traditional board briefings are no longer sufficient to keep pace with technology that is moving at an unprecedented rate.

One way to deepen the board’s know-how is experiential learning, such as tabletop exercises and live demonstrations. Henderson cited an example in which a management team walked a board through a contract review process, comparing the old manual method with a new AI-integrated version. The exercise allowed directors to see exactly where the technology makes decisions and where human accountability remains.

“When was the last time a board would want to go through the depths of a contract review process?” he said. This hands-on exercise “allowed them to say, ‘we can actually ask the right questions … because now we understand how that technology is being used.’”

A deeper understanding is critical, especially as AI introduces new risks to organizations. Companies have been flagging AI-related risks in regulatory filings, including hallucinations and misinformation, according to the EY report. Boards are expected to ensure that management has safeguards in place, from testing protocols to clear lines of accountability.

“One in five Fortune 100 companies … disclosed that hallucination, bias and inaccuracies are a significant risk,” Henderson said. “That’s crazy when you think about it.”

Another way to better equip boards to handle risks is to add diverse talent. Many boards were built around financial, legal or operational expertise, but that mix may no longer be sufficient, he said. Boards could use members with more tech fluency. Diversity, in this context, means a wider range of skills and perspectives that can help directors ask better questions.

It can even mean creating an ‘AI companion’ for the boardroom to help directors in their governance duties, one that’s always “thinking, that’s processing, that’s giving feedback,” Henderson said.

Only 1% of layoffs due to AI replacement

The workforce is another lever for boards to pull. “Boards are starting to realize that you just can’t separate AI from your talent strategy. It’s now absolutely inseparable,” Henderson said. Rather than replacing entry-level workers with AI, he argues, companies should redesign their duties so they become skilled at working with it.

“Companies still need entry-level workers – just not for the same tasks,” according to EY’s report. “The next generation, especially those who have been exploring AI since it first became widely available, brings fresh perspectives and a deep understanding of digital tools that can help organizations make the most of AI itself.”

Henderson agrees. “Eliminating junior staff is a long-term risk,” he said. “You and I grew up as entry-level workers. That’s where we formed our judgment. That’s where we formed our leadership skills. That’s where we formed our context.”

The solution is “not to reduce the entry-level people,” Henderson added. Rather, “it’s to redesign how they work better with AI as co-workers.”

IBM already figured this out. Last month, it announced that it was tripling hiring of U.S. entry-level workers and changing their roles for an “AI-first workplace,” according to a company blog post. “If we don’t continue to invest in entry-level hires, what happens in three to five years?” said Chief HR Officer Nickle LaMoreaux. “There’s no pipeline; the well simply dries up.”

What about tech companies that have announced mass layoffs related to AI, including Meta, Amazon, Oracle and Block? Those cuts don’t reflect the full picture, Henderson said. He cited what he described as a “shocking” Gartner survey showing how small a share of layoffs is actually attributable to AI.

Only 1% of layoffs in the first half of 2025 were due to AI increasing employees’ productivity, the survey said.