AWS is expanding its push into custom AI chips, betting that tighter control over silicon, servers and data centers will help it offer customers lower inference costs as demand for AI booms.

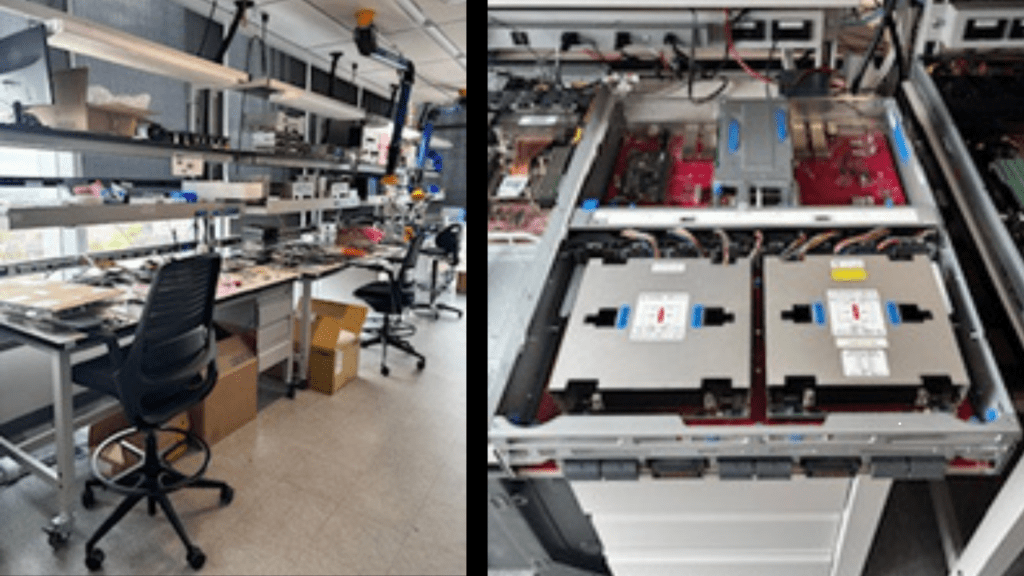

During a recent visit to the AWS AI Chips Lab in Austin, Tex., executives described a vertically integrated approach that spans chip design, system assembly and fleet operations – an effort aimed at improving performance while lowering the cost of running large-scale AI workloads.

AWS’ strategy centers on four families of chips: Nitro, which offloads networking, storage and security functions; Graviton, AWS’ Arm-based CPU; Trainium, its machine learning accelerator designed for training and inference; and Inferentia, which is optimized for inference workloads.

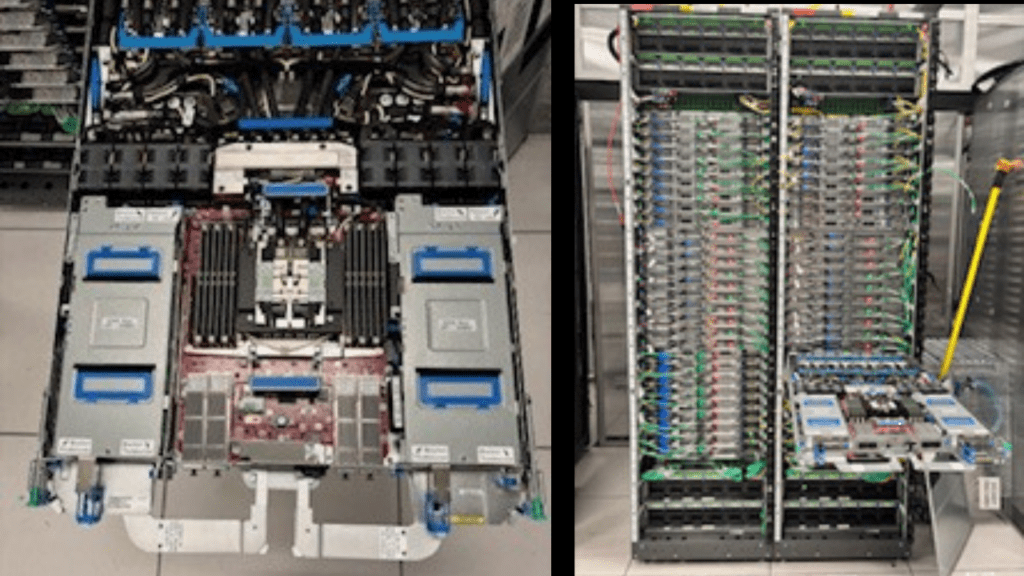

These components are increasingly being combined into tightly integrated systems, including what AWS calls “compute sleds,” where multiple chips are optimized to work together inside a single server.

AWS executives said the approach is delivering measurable gains. The latest generation of Trainium chips offers roughly 30% to 40% better price performance compared with prior systems, driven by workload-specific design and tighter integration across components.

“We are very focused on what the customers are using them for,” said Mark Carroll, AWS director of engineering at the lab. “There’s no waste within this chip. It does factor in and contribute to actual cost performance.”

That focus on efficiency is becoming critical as AI costs surge. Training frontier models now requires massive clusters of accelerators, pushing cloud providers to rethink how infrastructure is built and operated.

Trainium3, in particular, is Amazon’s first 3-nanometer chip, which improves performance and energy efficiency and can lower the cost of running AI workloads over time.

It’s not a new endeavor for the team. “We started with an inference-only chip in 2018,” said Kris King, the lab’s director. “We’ve been doing this for many years. This isn’t our first rodeo.”

AWS’ custom silicon push reflects a broader industry shift. Companies including Google, Microsoft and Meta are all developing in-house chips to reduce dependence on Nvidia, whose GPUs dominate the AI market but come with high costs and limited supply.

Still, AWS is not abandoning third-party chips. Nvidia GPUs remain widely used across its cloud platform. But executives made clear that custom silicon is playing an increasingly central role. Graviton chips now account for more than half of the new compute capacity AWS is adding to its data centers, according to Ali Saidi, distinguished engineer at Amazon.

Adoption is also broad. Among AWS’ top 1,000 customers, 98% use Graviton in some capacity, powering services ranging from databases to analytics platforms, Saidi added.

That demand is reshaping how data centers are built. AWS is designing everything from chips to racks to entire facilities as a single system, optimizing for power, cooling and cost.

Power consumption has become a central constraint. AWS expects its power capacity for AI infrastructure to double in the coming years, reflecting the energy intensity of modern AI workloads.

Cooling is another pressure point. Instead of relying solely on traditional air cooling, AWS is increasingly turning to liquid cooling and other techniques to handle the heat generated by high-density AI servers – particularly those packed with GPUs or custom accelerators.

AWS’ approach also extends to supply chain control. While manufacturing is handled by partners such as TSMC, most chip design and development work is done in the U.S., including in Texas, Seattle and Cupertino, AWS said.

Once fabricated, chips are integrated into systems that AWS builds and deploys at scale across its global data center fleet.

That end-to-end control, executives said, is a key differentiator. By designing chips, boards, servers and data centers together, AWS can optimize for long-term operational costs rather than just upfront hardware performance.

For enterprise buyers, the pitch is straightforward: lower costs, better performance and tighter integration across services.

The broader implication is that AI infrastructure is no longer just about chips; it is about systems. As AI models grow larger and more complex, performance increasingly depends on how compute, memory and networking are orchestrated together. Amazon executives pointed to growing demand for CPUs like Graviton to handle orchestration tasks, such as managing memory access and processing outputs from AI models.

At the same time, custom networking technologies – including AWS’ Nitro system – are being used to reduce latency and improve data movement across distributed workloads.

All of this is happening as competition intensifies. Google continues to expand its Tensor Processing Units (TPUs), while Microsoft has introduced its Maia AI chip. Meta is developing custom AI silicon for its data centers, and xAI has signaled plans to build its own infrastructure.

Meanwhile, market leader Nvidia is pushing ahead with next-generation GPUs and integrated AI systems. Chip designer Arm is also exploring deeper moves into data center silicon, after capturing the vast majority of the mobile processor market.

AWS recently partnered with Cerebras Systems to integrate its wafer-scale inference chips into its infrastructure, aiming to deliver lower-latency AI performance.