As enterprises race to move AI from pilot to production, a quieter force is shaping who succeeds and who stalls: culture. A new report from MIT Technology Review Insights in partnership with Infosys Topaz argues that psychological safety – the ability to question, experiment and even fail without fear of career damage – is not a nice-to-have condition but a measurable driver of AI performance.

Findings from "Creating psychological safety in the AI era," which is based on a global survey of 500 senior executives conducted in mid-2025, include the following:

- 83% of executives believe that a company culture that prioritizes psychological safety “measurably” improves the success of AI initiatives.

- 22% admit they hesitated to lead an AI project because they feared being blamed if it didn't succeed.

- 48% of executives said their companies have a “moderate” degree of psychological safety, while 39% reported it as “high.”

In this email Q&A with The AI Innovator, Rafee Tarafdar, chief technology officer at Infosys, discusses how enterprises can operationalize psychological safety at scale, why leaders must model curiosity and transparency, and what he is seeing as companies move from AI augmentation to automation and, increasingly, agent-driven workflows.

The AI Innovator: The report says psychological safety is a measurable driver of AI outcomes. How do you imbue this soft skill in an enterprise at scale?

Rafee Tarafdar: While psychological safety can feel abstract, our research shows it is anything but. In fact, 83% of global business leaders say psychological safety measurably improves the success of AI initiatives, with nearly half describing the impact as direct.

It is critical that leaders operationalize psychological safety through clear experimentation frameworks and safe-to-fail environments where teams can test and refine AI without reputational risk. When reinforced through consistent leadership behaviors, structured communication and feedback loops, psychological safety becomes less an abstract cultural ideal and more an organizational capability (one that can be repeated, measured and scaled to accelerate AI adoption and outcomes).

One technique is to define both fixed goals and stretch goals. Stretch goals are aspirational by nature and suit areas with many unknowns, where teams should be given the freedom to experiment and innovate.

What guidelines should enterprises adopt to communicate to employees and managers that they should not fear failure?

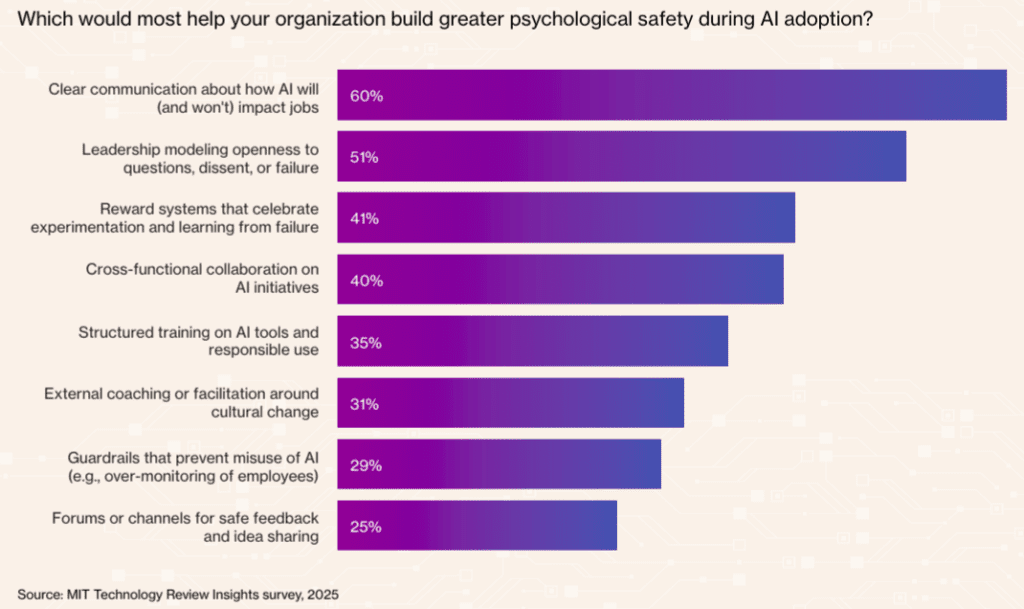

Leaders need to model the mindset they want to see by sharing lessons from failed pilots, rewarding curiosity and explicitly reinforcing that progress matters more than perfection. Our research found that over half of executives (51%) say leadership that openly models curiosity, dissent and learning from failure is one of the most effective ways to build psychological safety during AI adoption.

We’ve seen that storytelling is powerful. When people hear about others who took smart risks and learned from them, it sets a permission structure. Codifying that learning mindset in recognition systems and team routines helps make it stick beyond just comms.

How do you combat fears that deploying AI successfully will lead to job losses?

When it comes to deploying AI at scale, leaders must focus on clarity and capability. Sixty percent of business leaders say transparency about how AI will — and will not — impact jobs is the most important factor in building psychological safety.

It is imperative for leaders to give everyone in the organization AI tools that make them more effective in their work. For areas where work will be automated, leaders should provide a clear transition path through learning and upskilling programs, so that people can move into higher-order roles and eventually build deeper domain expertise.

Infosys works with enterprises at different stages of AI maturity. At a high level, where are enterprises today when it comes to AI deployment? Are there geographic differences?

We are seeing three stages of evolution in enterprises. The first stage is AI augmentation, where work happens as usual but AI tools and assistants are used to improve productivity and do the work better. This stage is now very mature in most organizations, and adoption rates are high.

The next stage is where tasks are getting automated. For example, you can build an entire prototype through prompts — this has started happening across some organizations.

The third stage is about redesigning processes and ways of working so that work is delegated to both agents and humans and completed collaboratively. This is still in the early stages, but we are finding that it delivers the most value.

As enterprises move from experimentation to scaled adoption, our research shows that organizations with strong experimentation cultures are far more likely to move AI projects from pilot to production (three-quarters of the most successful companies strongly encourage experimentation).

While there are regional differences, it is less about geography and more about whether an organization is building with intention.

What have you observed about enterprise AI trends or advancements that surprised you?

What’s surprised us the most is how quickly enterprises are moving from isolated pilots to orchestrated, multi-agent systems. Notably, among organizations successfully scaling AI, nearly two-thirds now formally track the link between psychological safety and AI performance, signaling a more systems-level approach to AI adoption.